As of 2017, there were over 2.8 million apps available on the Apple App Store and close to that number on the Google Play Store. That is a staggering number of applications, most of which are unlikely ever to be parsed and decoded by existing forensic tools.

How do we fill that gap?

As I’m sure we are all aware, common mobile forensic tools tend to parse applications pretty well but, as with any forensic tool, their output should always be validated. Tools are known to get things wrong or miss things from time to time.

At the start of a case, in order not to get bogged down, we should carefully consider the questions we aim to answer, and whether dumping a handset and relying on the decoded data from our tool is actually going to answer them.

I thought I would try to identify some artefacts in common apps that might help answer the attribution questions an analyst may be faced with.

The age-old question of ‘putting bums on seats’ or, in our instance, ‘hands on the mobile device’ is often the aim in prosecution cases. Digging through some common application plist and database files should help show this type of activity.

Let’s take a look…

#Instagood

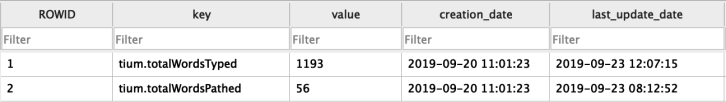

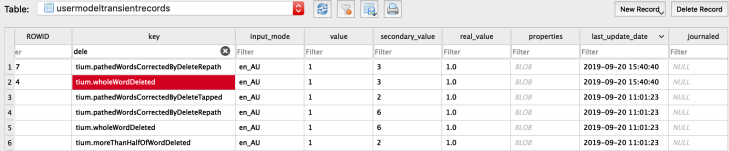

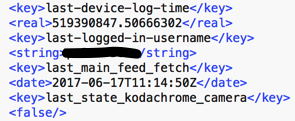

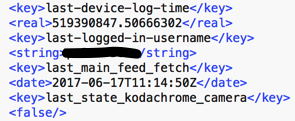

com.burbn.instagram.plist

As you can see, we have a timestamp which records the Instagram swipe-down (page reload) action.

Great for proving your kids were on Instagram when they said they were asleep.
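If you want to check a plist like this without relying on a tool's decoding, here is a minimal Python sketch. I have not assumed the name of the Instagram key; instead the hypothetical helper `find_timestamps` walks the whole plist and reports anything that looks like a date. The numeric ranges used to guess between Cocoa (2001) and Unix (1970) epochs are my own heuristic and will throw up false positives, so treat the output as leads to verify.

```python
import plistlib
from datetime import datetime, timedelta, timezone

UNIX_EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
COCOA_EPOCH = datetime(2001, 1, 1, tzinfo=timezone.utc)


def find_timestamps(node, path=""):
    """Yield (key_path, utc_datetime) for every date-like value in a plist tree."""
    if isinstance(node, dict):
        for key, value in node.items():
            yield from find_timestamps(value, f"{path}/{key}")
    elif isinstance(node, (list, tuple)):
        for index, value in enumerate(node):
            yield from find_timestamps(value, f"{path}[{index}]")
    elif isinstance(node, datetime):
        # plistlib returns <date> values as naive datetimes expressed in UTC
        yield path, node.replace(tzinfo=timezone.utc)
    elif isinstance(node, (int, float)) and not isinstance(node, bool):
        if 3e8 <= node < 1e9:
            # HEURISTIC (assumption): Cocoa seconds, roughly 2010 to 2032
            yield path, COCOA_EPOCH + timedelta(seconds=node)
        elif 1.2e9 <= node < 2e9:
            # HEURISTIC (assumption): Unix seconds, roughly 2008 to 2033
            yield path, UNIX_EPOCH + timedelta(seconds=node)


def scan_plist(plist_path):
    """Load an XML or binary plist and list its date-like values."""
    with open(plist_path, "rb") as handle:
        return list(find_timestamps(plistlib.load(handle)))
```

Calling `scan_plist("com.burbn.instagram.plist")` on a working copy of the file returns a list of key paths and UTC datetimes to compare against what your tool reports.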

#Earworms

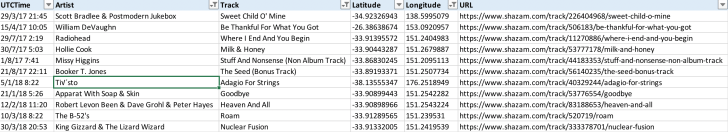

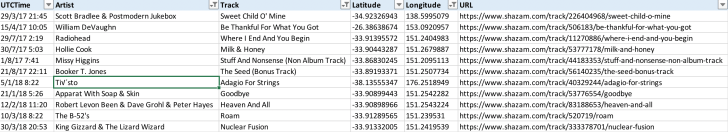

ShazamDataModel.sqlite

Shazam gives users the ability to identify music while on the go, great for those wriggly little earworms. What you may not know is that Shazam records your location and the time every time you Shazam a track. The format of this database table doesn’t appear to have changed over the last two years.

Highly useful for showing user interaction and bad taste in music…
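To pull the tag times and locations out yourself, something along these lines should work. This is a sketch under assumptions: the table name `ZSHTAGRESULTMO` and the columns `ZDATE`, `ZTRACKNAME`, `ZLATITUDE` and `ZLONGITUDE` are what I would expect in a Core Data store like this one, but check them against the schema of your own copy first. The only part I would treat as solid is the date conversion: Core Data stores dates as seconds since 2001-01-01 UTC, so adding 978307200 turns them into Unix time.

```python
import sqlite3
from pathlib import Path

COCOA_OFFSET = 978307200  # seconds between 1970-01-01 and 2001-01-01 (UTC)

# ASSUMPTION: table and column names; confirm against the schema of your copy
QUERY = f"""
SELECT datetime(ZDATE + {COCOA_OFFSET}, 'unixepoch') AS tagged_utc,
       ZTRACKNAME AS track,
       ZLATITUDE  AS latitude,
       ZLONGITUDE AS longitude
FROM ZSHTAGRESULTMO
ORDER BY ZDATE
"""


def shazam_tags(db_path):
    """Return (tagged_utc, track, latitude, longitude) rows, oldest first."""
    # Open read-only so the working copy is not altered by the query
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    con = sqlite3.connect(uri, uri=True)
    try:
        return con.execute(QUERY).fetchall()
    finally:
        con.close()
```

Run it against a copy of ShazamDataModel.sqlite, never the original extraction, and plot the coordinates to see where the device was when each track was tagged.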

#SIMS

CellularUsage.db

Apple does quite a good job of preserving the list of ICCIDs of the SIM cards which have been present in an iPhone, and sometimes you may also see the phone number (MSISDN) which has been saved on that SIM card. This varies between providers and may not always be present.

The field storing the phone number (MSISDN) on a SIM is editable, so it is not 100% reliable, although I have not seen it modified in recent years.

Last Known ICCID and telephone number can also be seen in the com.apple.commcenter.plist file.
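A short sketch for listing the SIM history from CellularUsage.db follows. The table `subscriber_info` and the columns `subscriber_id` (ICCID), `subscriber_mdn` (MSISDN) and `last_update_time` are assumptions on my part, as is the idea that the timestamp is in Cocoa seconds, so confirm both against your own database before relying on the output.

```python
import sqlite3
from pathlib import Path

COCOA_OFFSET = 978307200  # seconds between 1970-01-01 and 2001-01-01 (UTC)

# ASSUMPTION: table/column names and the Cocoa-epoch timestamp; verify first
QUERY = f"""
SELECT subscriber_id  AS iccid,
       subscriber_mdn AS msisdn,
       datetime(last_update_time + {COCOA_OFFSET}, 'unixepoch') AS last_update_utc
FROM subscriber_info
ORDER BY last_update_time
"""


def sim_history(db_path):
    """Return (iccid, msisdn, last_update_utc) rows, oldest first."""
    # Read-only open: the query cannot change the working copy
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    con = sqlite3.connect(uri, uri=True)
    try:
        return con.execute(QUERY).fetchall()
    finally:
        con.close()
```

A missing MSISDN comes back as `None`, which fits the point above that the number is not always saved on the SIM.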

#locationlocationlocation

CallHistory.storedata

Perhaps not all that useful for cases where the device has always been in the same country, but if you’re investigating someone who tends to travel, it would be nice to know where they were when the calls were made. Again, with a simple SQL query, you can pull out this information.
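As a rough illustration of that simple SQL query, here is a Python sketch. I am assuming the usual Core Data layout for this file: a `ZCALLRECORD` table with `ZDATE`, `ZADDRESS`, `ZDURATION`, `ZORIGINATED`, `ZISO_COUNTRY_CODE` and `ZLOCATION` columns. These names can vary between iOS versions, so check the schema first and adjust.

```python
import sqlite3
from pathlib import Path

COCOA_OFFSET = 978307200  # seconds between 1970-01-01 and 2001-01-01 (UTC)

# ASSUMPTION: ZCALLRECORD and its column names; confirm against your copy
QUERY = f"""
SELECT datetime(ZDATE + {COCOA_OFFSET}, 'unixepoch') AS call_utc,
       ZADDRESS  AS number,
       ZDURATION AS seconds,
       CASE ZORIGINATED WHEN 1 THEN 'outgoing' ELSE 'incoming' END AS direction,
       ZISO_COUNTRY_CODE AS country,
       ZLOCATION AS location
FROM ZCALLRECORD
ORDER BY ZDATE
"""


def call_locations(db_path):
    """Return one row per call with its UTC time and recorded country/location."""
    # Read-only open: the query cannot change the working copy
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    con = sqlite3.connect(uri, uri=True)
    try:
        return con.execute(QUERY).fetchall()
    finally:
        con.close()
```

On some iOS versions the number may come back as bytes rather than text, so decode it before reporting.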

#layouts

IconState.plist

If you are interested in identifying how the UI looked, you can recover the custom strings, including emojis, used as headers for the groups of app icons on iOS. In our IconState.plist file the entries can be read from left to right, top to bottom, to visualise the layout of the apps. Second panes of apps within a group are nested in another array, as can be seen in the example below.

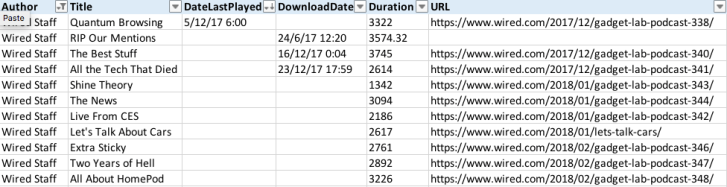

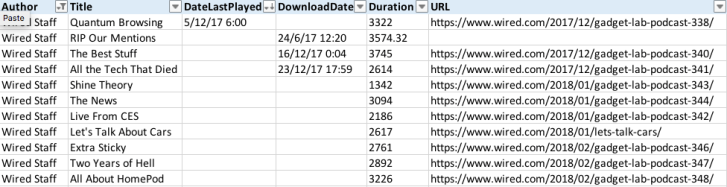
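The nesting shown in the screenshots can be walked with a few lines of Python. This sketch assumes the layout I would expect for this file: a top-level `buttonBar` array for the dock, an `iconLists` array with one inner array per home screen page, and folders stored as dictionaries with a `displayName` and their own `iconLists` of panes. The function names are mine, and other entry types (widgets, for example) are simply printed as-is.

```python
import plistlib


def describe_layout(state):
    """Return text lines describing dock, pages and folders in reading order."""
    lines = ["Dock: " + ", ".join(str(item) for item in state.get("buttonBar", []))]
    for page_no, page in enumerate(state.get("iconLists", []), start=1):
        lines.append(f"Page {page_no}")
        for item in page:
            if isinstance(item, dict) and "iconLists" in item:
                # ASSUMPTION: a dict holding its own iconLists is a folder (group)
                lines.append(f"  Folder: {item.get('displayName', '(unnamed)')}")
                for pane_no, pane in enumerate(item["iconLists"], start=1):
                    apps = ", ".join(str(app) for app in pane)
                    lines.append(f"    Pane {pane_no}: {apps}")
            else:
                # Plain entries are bundle identifiers, left to right, top to bottom
                lines.append(f"  {item}")
    return lines


def load_layout(plist_path):
    """Load the icon state plist (XML or binary) and describe it."""
    with open(plist_path, "rb") as handle:
        return describe_layout(plistlib.load(handle))
```

Printing `"\n".join(load_layout(path))` gives a quick text rendering of each page, which is handy when you want to show how a user had organised their apps, emoji folder names included.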

#podcasts

MTLibrary.sqlite

According to Fast Company, “In March 2018, Apple Podcasts passed 50 billion all-time episode downloads and streams.” Thankfully for us, podcast episodes are all tracked in a database stored on the iPhone, which holds useful information about when each episode was downloaded to the device and when it was last played.

Showing when a podcast was last played could go some way to corroborating whether or not someone was listening to their headphones when they should have been listening to their college lecturer.
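For the download and last-played times, a sketch along the following lines should do. The table `ZMTEPISODE` and the columns `ZTITLE`, `ZDOWNLOADDATE` and `ZLASTDATEPLAYED` are my assumptions about the schema, so list the columns in your own copy of MTLibrary.sqlite and adjust before trusting the results.

```python
import sqlite3
from pathlib import Path

COCOA_OFFSET = 978307200  # seconds between 1970-01-01 and 2001-01-01 (UTC)

# ASSUMPTION: ZMTEPISODE and its column names; confirm against your copy
QUERY = f"""
SELECT ZTITLE AS episode,
       datetime(ZDOWNLOADDATE + {COCOA_OFFSET}, 'unixepoch')   AS downloaded_utc,
       datetime(ZLASTDATEPLAYED + {COCOA_OFFSET}, 'unixepoch') AS last_played_utc
FROM ZMTEPISODE
ORDER BY ZLASTDATEPLAYED DESC
"""


def podcast_activity(db_path):
    """Return (episode, downloaded_utc, last_played_utc), most recently played first."""
    # Read-only open: the query cannot change the working copy
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    con = sqlite3.connect(uri, uri=True)
    try:
        return con.execute(QUERY).fetchall()
    finally:
        con.close()
```

Episodes that were downloaded but never played come back with `None` for the last-played time and sort to the end of the list.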

If you don’t listen to the Wired UK Podcasts, then it’s definitely worth a pop.

Hopefully, this quick write-up on some iOS artefacts will help someone out. I believe they may be of some assistance in attributing the usage and location of devices at very specific times. I’m sure the commercial tools out there already extract some of this information, but an analyst should be able to parse it themselves. Identifying app data that a tool may have missed and ensuring your evidence is verified as accurate should be paramount.